Maintaining large Domino databases can be a challenge. It can take considerable time and require significant additional storage.

When maintaining large Domino databases, the first thing to do is to get a good idea of why the databases have become so large. You can then consider mitigation steps like:

- Deleting any documents you no longer need.

- Implementing attachment compression.

- Implementing DAOS on a separate drive.

- Implementing document compression.

- Moving full-text indexes to a separate drive. Are attachments being indexed? If so, do they need to be?

- Removing any unused views.

- Making sure the views do not contain time-based formulas such as @Today. When a view selection formula uses @Today, it can cause the view index to be rebuilt every day, which can lead to a significant decrease in performance. This is because the result of the formula changes as the date changes, even when no documents have been modified, so the existing index cannot simply be updated.

- If possible, avoiding Readers and Authors fields.

- Archiving older documents.
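Before choosing among these steps, it helps to know which databases are actually the large ones. The following is a minimal sketch, not part of Domino or the Optimizer, that walks a data directory and lists the biggest `.nsf` files. The function name `largest_databases`, the path `/local/notesdata`, and the 10 GiB threshold are all assumptions for illustration; substitute your own data directory and limit.

```python
import os
from pathlib import Path


def largest_databases(data_dir, min_bytes=10 * 1024**3, limit=20):
    """Return (size_bytes, path) tuples for .nsf files of at least
    min_bytes under data_dir, largest first, at most `limit` entries."""
    found = []
    for root, _dirs, files in os.walk(data_dir):
        for name in files:
            if not name.lower().endswith(".nsf"):
                continue
            path = Path(root) / name
            try:
                size = path.stat().st_size
            except OSError:
                continue  # file vanished or is unreadable; skip it
            if size >= min_bytes:
                found.append((size, str(path)))
    found.sort(reverse=True)
    return found[:limit]


if __name__ == "__main__":
    # Hypothetical data directory; change this to match your server.
    for size, path in largest_databases("/local/notesdata"):
        print(f"{size / 1024**3:8.1f} GiB  {path}")
```

This only reports file size on disk. It does not say why a database is large, and with DAOS enabled the attachments are stored outside the NSF, so treat the output as a starting point for the investigation rather than an answer.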

On modern versions of Domino, HCL recommends using DBMT for database management, and we have fully automated its operation in our Domino Optimizer product.

The Domino Optimizer allows you to automate many of the mitigation steps outlined above, but large databases need some extra care.

The Domino Optimizer calls DBMT to do many of the database operations, so remember that DBMT will take a database offline when it is working on it. Ensure your databases are in a cluster and give consideration to when any scheduled agents may be running. DBMT is cluster-aware, so it should not take multiple replicas of the same database offline at the same time.

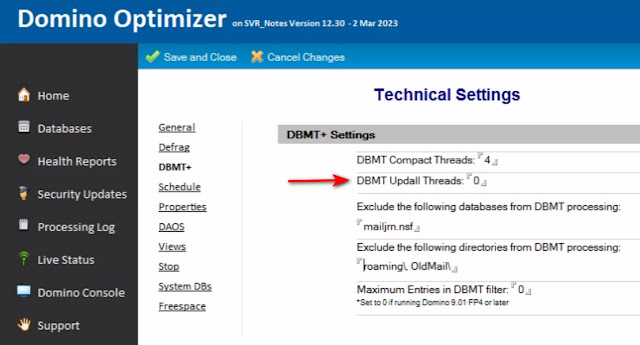

When running the Optimizer on a server with large databases, we now recommend you leave the Updall process in place and allow it to maintain views and full-text indexes. Turn off this functionality in DBMT by setting the DBMT Updall threads to zero.
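For readers who drive DBMT from the server console rather than through the Optimizer's settings, the sketch below shows how a command with zero Updall threads might be composed. The helper name `dbmt_command` is hypothetical, and the sketch assumes the `-compactThreads` and `-updallThreads` options of `load dbmt`; check the HCL DBMT documentation for your Domino release before relying on it.

```python
def dbmt_command(compact_threads=1, updall_threads=0):
    """Build a 'load dbmt' console command string.

    Assumes DBMT's -compactThreads and -updallThreads options.
    updall_threads=0 reflects the advice above to leave view and
    full-text index maintenance to the regular Updall task.
    """
    if compact_threads < 0 or updall_threads < 0:
        raise ValueError("thread counts must not be negative")
    return (
        f"load dbmt -compactThreads {compact_threads} "
        f"-updallThreads {updall_threads}"
    )


if __name__ == "__main__":
    print(dbmt_command(compact_threads=2))
```

The function only builds the text of the command. It does not send anything to a Domino server.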

When you first schedule the Optimizer, don't turn on multiple space-saving features at the same time. Allow the Optimizer to 'bed in' over a period of time and then slowly enable features to maximise space savings.

Remember that the databases took a long time to grow this large, so it is going to take a little while to optimize them.

As always, we are here to help. Let us know your thoughts and if you have any questions or concerns.
